For the hardest problems – abstract visual reasoning like ARC-AGI-2, research-level mathematics like FrontierMath, or expert-level multi-domain questions like Humanity's Last Exam – the public leaderboard is a reasonable guide: pick the highest-ranked model and you'll probably get the best result.

For easy problems, like text classification or entity extraction, fine-tuning a small model on a few thousand labelled examples often does the trick at a fraction of the cost.

The interesting gap is in the middle: tasks that are genuinely complex, require real domain knowledge and judgement – but aren't so hard that only frontier models can handle them. In practice, running a frontier model on every request at scale isn't sustainable. But it's not obvious whether smaller, cheaper models can hold up on tasks like these.

This article explores how we approached that question at Red Sift – what we measured, how we measured it, and what the results tell us about model selection for tasks like this.

The task: Summarising security assessments

Radar Lite runs automated security checks against domains across three areas: email security, DNS integrity, and web/TLS configuration. The raw output is a structured record of test results – pass, fail, warn, neutral – with evidence and remarks for each finding.

The summariser's job is to translate that into a readable markdown report: surfacing the most important failures, explaining real-world impact, and providing actionable recommendations.

This is an ideal benchmarking task for a few reasons:

- It requires real domain knowledge. DMARC, SPF, DKIM, MTA-STS, DNSSEC, TLS certificate chains – these aren't topics that show up heavily in generic training data. A model that treats DMARC p=none as a passing result, or mistakes a broken DNSSEC chain for a minor warning rather than an outage risk, will produce summaries that actively mislead security teams.

- It's hard, but not extremely hard. The evidence is provided – the model doesn't need to discover anything, just interpret it correctly and write clearly. The ceiling is structured, domain-grounded writing, not open-ended reasoning or mathematical proof.

- Quality is measurable. Accuracy, completeness, structure, clarity – there are concrete, assessable dimensions, which makes consistent scoring possible.

- The cost and latency stakes are real. Radar Lite is a free tool. Running frontier models on every request isn't viable at scale, so the cost-quality tradeoff has direct production consequences.

Building the benchmark

Before running any security checks, Radar Lite parses the user's natural language query to detect two things:

- Intent – which security domain the user is asking about: EMAIL (DMARC, SPF, DKIM, MTA-STS), DNS (DNSSEC, CAA records), WEB (TLS configuration, HTTPS, HSTS), or ANY for a full assessment across all three

- Scope – how many domains are involved and how: SINGLE for one domain, MULTI for several assessed independently, or COMPARE for a side-by-side comparison

Both shape what checks are run and, critically, what the summariser is expected to produce – a COMPARE summary needs to draw contrasts and declare a winner; a MULTI summary needs a separate section per domain. This is what makes the benchmark non-trivial to sample: the task changes shape depending on what the user asked.
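To make the two dimensions concrete, here is a minimal sketch of how a parsed query and its expected summary shape could be modelled. The enum values come from the description above; `ParsedQuery` and `expected_sections` are hypothetical names introduced for illustration, not Radar Lite's actual code.

```python
from dataclasses import dataclass
from enum import Enum


class Intent(Enum):
    EMAIL = "EMAIL"  # DMARC, SPF, DKIM, MTA-STS
    DNS = "DNS"      # DNSSEC, CAA records
    WEB = "WEB"      # TLS configuration, HTTPS, HSTS
    ANY = "ANY"      # full assessment across all three


class Scope(Enum):
    SINGLE = "SINGLE"    # one domain
    MULTI = "MULTI"      # several domains, assessed independently
    COMPARE = "COMPARE"  # side-by-side comparison


@dataclass(frozen=True)
class ParsedQuery:
    """Hypothetical container for the parser's output."""
    intent: Intent
    scope: Scope
    domains: tuple[str, ...]


def expected_sections(query: ParsedQuery) -> list[str]:
    """Outline what the summary must contain for this query (illustrative only)."""
    if query.scope is Scope.COMPARE:
        # A COMPARE summary draws contrasts and declares a winner.
        return ["contrasts between " + " and ".join(query.domains), "winner"]
    # MULTI needs one section per domain; SINGLE has exactly one domain.
    return [f"section for {d}" for d in query.domains]
```

A rubric criterion such as scope-specific formatting can then be checked against this outline rather than against a single fixed template.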

We pulled approximately 3,000 real production queries from storage and sampled those down to a 100-entry benchmark dataset. Random sampling would skew toward the most common query type – single-domain, any-intent – so we used stratified sampling to ensure the full range of the summariser's behaviour was covered:

Benchmark sampling distribution

| Dimension | Distribution |
| --- | --- |
| Scope | SINGLE 60%, MULTI 20%, COMPARE 20% |
| Intent | ANY 40%, WEB 20%, EMAIL 20%, DNS 20% |
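As a rough illustration of the sampling step, the sketch below draws a fixed quota from each scope/intent stratum and skips any query whose domain has already been used. It makes two loud assumptions that the figures above do not confirm: the quota for each stratum is the product of the two marginal shares, and queries are dicts with hypothetical keys `scope`, `intent` and `domains`.

```python
import random
from collections import defaultdict

# Marginal shares taken from the sampling distribution above.
SCOPE_SHARE = {"SINGLE": 0.6, "MULTI": 0.2, "COMPARE": 0.2}
INTENT_SHARE = {"ANY": 0.4, "WEB": 0.2, "EMAIL": 0.2, "DNS": 0.2}


def stratified_sample(queries, total=100, seed=0):
    """Draw up to `total` queries with per-stratum quotas and no repeated domain.

    Assumption: quota = total * scope share * intent share.
    """
    rng = random.Random(seed)
    by_stratum = defaultdict(list)
    for q in queries:
        by_stratum[(q["scope"], q["intent"])].append(q)

    sample, seen_domains = [], set()
    for scope, scope_share in SCOPE_SHARE.items():
        for intent, intent_share in INTENT_SHARE.items():
            quota = round(total * scope_share * intent_share)
            pool = by_stratum[(scope, intent)][:]
            rng.shuffle(pool)
            picked = 0
            for q in pool:
                if picked == quota:
                    break
                # Skip queries that reuse a domain already in the sample.
                if seen_domains.isdisjoint(q["domains"]):
                    sample.append(q)
                    seen_domains.update(q["domains"])
                    picked += 1
    return sample
```

If a stratum has too few eligible queries, this version simply returns fewer than `total` entries, so the caller should check the sample size.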

The dataset has four key properties:

- Representative – the scope split keeps SINGLE as the largest group, as it is in production, while deliberately over-sampling MULTI and COMPARE, which are structurally harder and more likely to expose model weaknesses

- Diverse – the intent split ensures all three security domains are covered, rather than defaulting to the most common query type

- Unbiased – the domain-uniqueness constraint means each entry is a genuinely independent observation; no single organisation's infrastructure disproportionately influences the results

- Statistically meaningful – at 100 entries, score differences of roughly 0.03–0.05 on the normalised 0–1 scale (3–5 percentage points) are reliably detectable, making it possible to distinguish real model differences from noise

Evaluating quality: LLM-as-judge

Standard automatic metrics like BLEU or ROUGE don't work here. A good summary isn't one that matches a reference string – it's one that is accurate, well-structured, and actionable. We used an LLM-as-a-judge approach, but there are a few variations to consider.

- Pairwise comparison – asking a judge model to pick the better of two outputs – is popular, but with a large number of model configurations and 100 entries each, you'd need thousands of comparisons just to rank them. And even then, you'd know which model won without knowing why.

- Direct scoring avoids the scaling problem, but the judge effectively invents its own rubric each time, which produces inconsistent results across runs.

- A structured rubric addresses both: the criteria are fixed up front, so the judge has no room to drift, and the per-criterion scores show exactly where each model falls short. We used 0–2 rather than binary scoring because some criteria have a genuine middle state – partially correct is meaningfully different from right or wrong.
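The scaling argument can be checked with a little arithmetic. The sketch below assumes every pair of configurations is compared on every entry, which is only one possible pairwise design, and uses the 19 configurations listed in the results table as the example count.

```python
from math import comb


def pairwise_judgements(n_configs: int, n_entries: int) -> int:
    """Judge calls needed if every pair of configurations meets on every entry."""
    return comb(n_configs, 2) * n_entries


def rubric_judgements(n_configs: int, n_entries: int) -> int:
    """Judge calls needed if each output is scored once against a fixed rubric."""
    return n_configs * n_entries


print(pairwise_judgements(19, 100))  # 171 pairs x 100 entries
print(rubric_judgements(19, 100))    # 19 configurations x 100 entries
```

Under these assumptions, the pairwise design needs 17,100 judge calls against 1,900 for rubric scoring, and the gap widens quadratically as configurations are added.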

We ended up with a 13-criterion rubric grounded in Radar Lite's output requirements, grouped into five areas:

- Factual accuracy – no hallucinated issues, all failures reported

- Language and tone – no leaked internal jargon, correct formatting, professional register

- Severity and structure – correct emoji usage, header levels, issue grouping

- Depth – root cause specificity, actionable recommendations

- Domain correctness – MX-null suppression rules (e.g. don't flag missing MTA-STS on a domain with no mail), scope-specific formatting for multi-domain queries

Each criterion is scored 0–2 for a maximum of 26 raw points, normalised to 0–1.
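The normalisation is simple enough to sketch directly. The function below is an illustration under the stated rubric shape (13 criteria, each scored 0–2); the criterion names used when calling it are placeholders, not the real rubric keys.

```python
N_CRITERIA = 13
MAX_PER_CRITERION = 2


def normalised_score(criterion_scores: dict[str, int]) -> float:
    """Sum per-criterion scores (0-2 each) and scale the total to the 0-1 range."""
    if len(criterion_scores) != N_CRITERIA:
        raise ValueError(f"expected {N_CRITERIA} criteria, got {len(criterion_scores)}")
    for name, score in criterion_scores.items():
        if score not in (0, 1, 2):
            raise ValueError(f"criterion {name!r} has out-of-range score {score}")
    # 13 criteria x 2 points = 26 raw points maximum.
    return sum(criterion_scores.values()) / (N_CRITERIA * MAX_PER_CRITERION)
```

For example, a summary that earns full marks on eleven criteria and one point on the other two gets 24 of 26 raw points, or roughly 0.92.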

Before using the judge at scale, we validated it. Three candidate judge models were each run 5 independent times on the same entries, and we measured per-criterion consistency – how often the same model gave the same score for the same input across independent runs. GPT-5.4 with low reasoning effort came out on top: 11 out of 13 criteria produced identical scores across all 5 runs, and per-criterion averages across runs ranged from 0.81 to 0.88 – a narrow spread that indicates stable calibration. It was selected as the judge for the full benchmark run.
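One way to compute this kind of consistency figure is sketched below. It assumes each run is stored as a mapping from entry ID to per-criterion scores; that layout and the criterion names in the usage example are hypothetical.

```python
def stable_criteria(runs):
    """Return the criteria whose score never changed across runs on any entry.

    `runs` is a list of runs; each run maps entry_id -> {criterion: score}.
    """
    entries = runs[0].keys()
    criteria = next(iter(runs[0].values())).keys()
    return [
        c for c in criteria
        # A criterion is stable if, for every entry, all runs gave one score.
        if all(len({run[e][c] for run in runs}) == 1 for e in entries)
    ]


# Two toy runs over two entries: "tone" flips on entry e1, "accuracy" never does.
runs = [
    {"e1": {"accuracy": 2, "tone": 1}, "e2": {"accuracy": 2, "tone": 2}},
    {"e1": {"accuracy": 2, "tone": 2}, "e2": {"accuracy": 2, "tone": 2}},
]
print(stable_criteria(runs))
```

Counting the length of the returned list against the total number of criteria gives a figure of the same form as the "11 out of 13" reported here.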

The models

We evaluated models from both commercial providers and open-source releases:

- OpenAI: GPT-5, GPT-5 mini, GPT-5 nano

- Anthropic: Claude Sonnet 4.6, Claude Haiku 4.5

- Google: Gemini 3.1 Pro, Gemini 3 Flash, Gemini 3.1 Flash Lite, Gemma 4 26B (via Vertex AI)

- Self-hosted open-source: Qwen3.5 122B, Qwen3.6 35B, Gemma 4 26B

Gemma 4 appears in both lists – it was run on our own infrastructure and via Vertex AI, to verify that our setup produces comparable results to the managed provider. Several models were tested both with and without extended reasoning, to understand whether the added latency and token cost produce meaningfully better summaries.

What we found

We ran each configuration against the full 100-entry dataset, with the judge model validated above scoring every output. Here is what came back.

Frontier models lead – but not by as much as you would expect

Gemini 3.1 Pro and Claude Sonnet 4.6 sit at the top at 0.93 and 0.92. But they aren't in a different league from the models below them. Several mid-tier models – most with some form of reasoning enabled – cluster between 0.88 and 0.90, all within 0.05 of the best result: GPT-5 low reasoning, Gemma 4 26B extended reasoning and Gemini 3 Flash low reasoning.

The best cost-performance options

Gemini 3 Flash produced the best overall balance in our results: 0.88 score, 7-second latency, $0.76 for 100 queries – more than 5× cheaper and more than twice as fast as Gemini 3.1 Pro. No model in the benchmark is faster: Gemini 3.1 Flash Lite matches its 7-second latency at a score of 0.85, and GPT-5 nano without reasoning comes close at 8 seconds but scores far lower at 0.58.

Gemma 4 on Vertex AI is the most cost-efficient viable option at $0.17 total, with a 0.86 score and 13-second latency. It sits just below Gemini 3 Flash in quality but at less than a quarter of the cost. For high-volume applications, that difference compounds fast.

GPT-5 mini with low reasoning is also worth noting: 0.87 at $0.46, matching the score of the full GPT-5 model running without reasoning ($1.70) – same quality, roughly a quarter of the cost.
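To see how these differences compound, the sketch below scales the 100-query totals from the results table to a larger volume. It assumes cost grows linearly with query count and that production queries resemble the benchmark entries; the one-million volume is an arbitrary illustration, not a Radar Lite traffic figure.

```python
BENCHMARK_QUERIES = 100


def cost_per_query(total_cost_usd: float, n_queries: int = BENCHMARK_QUERIES) -> float:
    """Average cost of one query, from a benchmark total."""
    return total_cost_usd / n_queries


def projected_cost(total_cost_usd: float, volume: int) -> float:
    """Linear projection of the benchmark cost to `volume` queries."""
    return cost_per_query(total_cost_usd) * volume


# Total cost for 100 queries, taken from the results table.
totals = {
    "Gemini 3.1 Pro": 4.07,
    "Gemini 3 Flash": 0.76,
    "Gemma 4 26B (Vertex AI, no reasoning)": 0.17,
}
for name, total in totals.items():
    print(f"{name}: ${projected_cost(total, 1_000_000):,.0f} per million queries")
```

Under those assumptions, a million queries would cost about $40,700 on Gemini 3.1 Pro, $7,600 on Gemini 3 Flash and $1,700 on Gemma 4 via Vertex AI.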

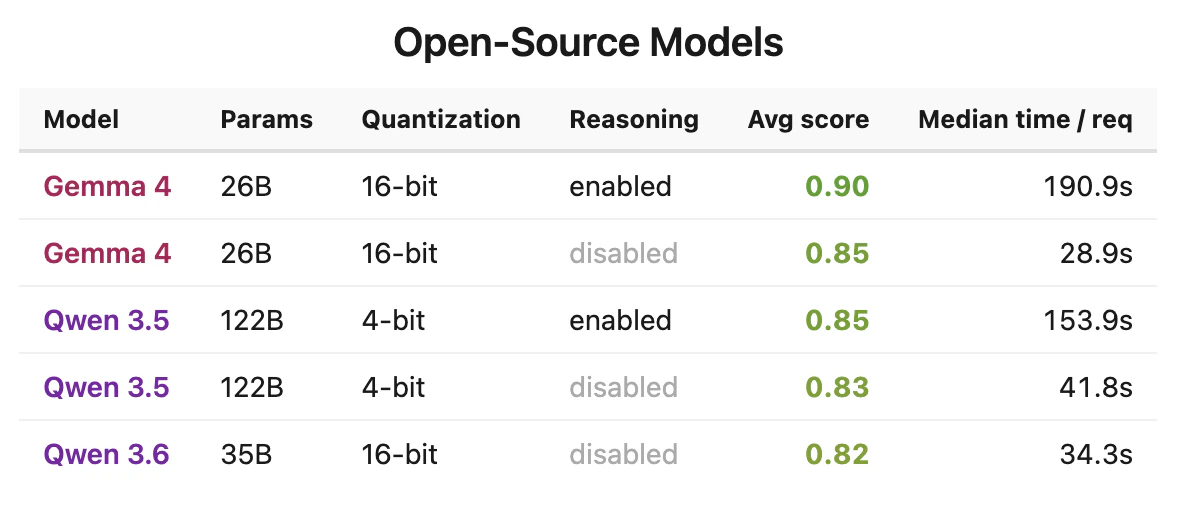

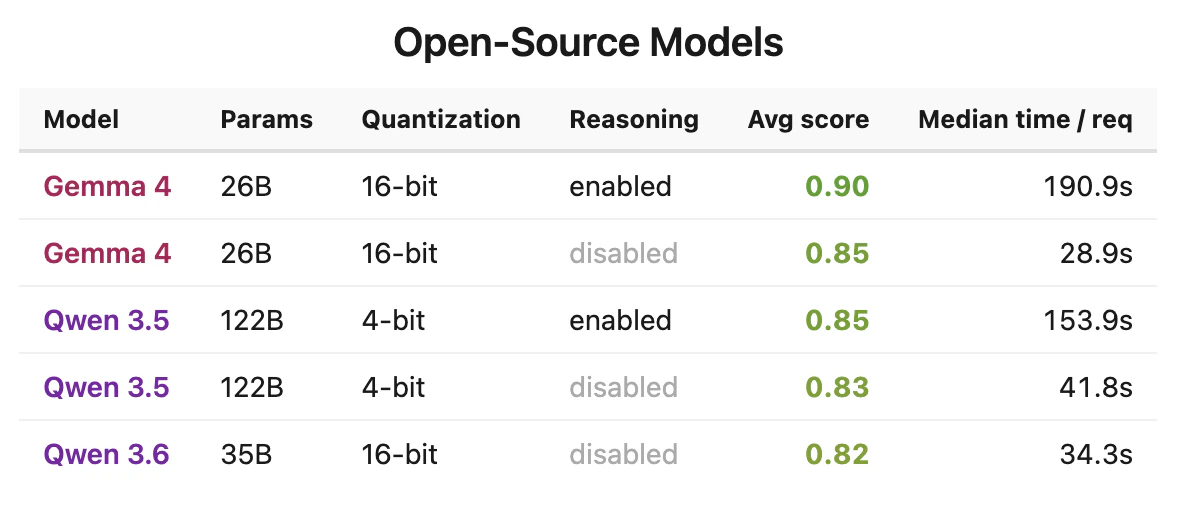

Self-hosted Gemma matches the managed API

We run Gemma 4 on our own infrastructure as well as through Vertex AI. The results are essentially identical across both reasoning modes – 0.85 (self-hosted) vs 0.86 (Vertex AI) without reasoning, and 0.90 vs 0.89 with reasoning enabled. Total output tokens across 100 queries without reasoning are also closely matched: 51,223 (self-hosted) vs 52,405 (Vertex AI). For teams running open models in production, this kind of cross-check matters: when self-hosted output aligns with the managed API on both quality and verbosity, you can be confident that your deployment is correctly configured and that benchmark results transfer across environments.
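A cross-check like this can be automated. The sketch below compares score and output-token totals for the no-reasoning runs; the tolerance values are hypothetical thresholds chosen for illustration, not ones used in this benchmark.

```python
def relative_gap(a: float, b: float) -> float:
    """Absolute difference between two values, relative to their mean."""
    return abs(a - b) / ((a + b) / 2)


def deployments_match(self_hosted, managed, score_tol=0.02, token_tol=0.05):
    """Check that two deployments agree on quality and verbosity.

    `score_tol` is an absolute gap on the 0-1 score; `token_tol` is a
    relative gap on total output tokens. Both defaults are assumptions.
    """
    score_ok = abs(self_hosted["score"] - managed["score"]) <= score_tol
    tokens_ok = relative_gap(self_hosted["output_tokens"], managed["output_tokens"]) <= token_tol
    return score_ok and tokens_ok


# Figures reported above for Gemma 4 without reasoning.
self_hosted = {"score": 0.85, "output_tokens": 51_223}
vertex_ai = {"score": 0.86, "output_tokens": 52_405}
print(deployments_match(self_hosted, vertex_ai))
```

With the reported numbers the token totals differ by a little over 2%, so the check passes under these tolerances.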

Reasoning helps, but the gains are modest

Across models where both modes were tested, extended or low reasoning typically adds 0.02–0.03 to the score:

- GPT-5: 0.89 (low) vs 0.87 (none)

- Claude Haiku 4.5: 0.86 (extended) vs 0.83 (none)

- Qwen3.5 122B: 0.85 (extended) vs 0.83 (none)

Whether that improvement justifies the latency and cost depends on your situation. For a security summary – where the task is structured writing from provided evidence rather than multi-step reasoning – the gains are real but not transformative.

The clear exception is GPT-5 nano: without reasoning it scores 0.58, the lowest in the entire benchmark and the only model that regularly produced incomplete or poorly structured summaries. With low reasoning effort it jumps to 0.72. For smaller models with lower inherent capability, reasoning may do more of the heavy lifting.
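The per-model gains can be recomputed from the results table. The sketch below lists every model that was run in both modes and prints the score difference; the dictionary layout is just a convenient way to hold the published numbers.

```python
from statistics import median

# (score with reasoning, score without reasoning), from the results table.
scores = {
    "GPT-5": (0.89, 0.87),
    "GPT-5 mini": (0.87, 0.84),
    "GPT-5 nano": (0.72, 0.58),
    "Claude Haiku 4.5": (0.86, 0.83),
    "Gemma 4 26B (Vertex AI)": (0.89, 0.86),
    "Gemma 4 26B (self-hosted)": (0.90, 0.85),
    "Qwen3.5 122B": (0.85, 0.83),
}

# Round to two decimals to avoid floating-point noise in the differences.
gains = {model: round(on - off, 2) for model, (on, off) in scores.items()}

for model, gain in sorted(gains.items(), key=lambda item: item[1]):
    print(f"{model}: +{gain:.2f}")
print(f"median gain: +{median(gains.values()):.2f}")
```

The median gain is 0.03, while GPT-5 nano stands apart at 0.14.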

Takeaways

Running this evaluation changed how we think about model selection for production tasks in the "challenging but not frontier-hard" range:

- General leaderboards test breadth, not depth. A model's rank on general benchmarks doesn't predict how it performs on a specific domain task. The only way to know is to benchmark on your actual workload with your actual data.

- Reasoning is a tradeoff, not a free upgrade. For most models it adds 0.02–0.03 to the score at the cost of significant latency. Whether that's worth it depends on your task – for structured writing grounded in provided evidence, the gains are real but modest.

- Benchmark on production data, not synthetic samples. Stratified sampling from real queries exposed the full range of task types that a benchmark on synthetic data would have missed. The model you pick may behave very differently on the tail of your distribution.

- Self-hosting is viable if you validate it. Open-source models running on your own infrastructure can match managed API quality – but only if you verify it. Score, output length, and reasoning behaviour should all align before you deploy it in production.

If you want to see the kind of summaries these models are generating, Radar Lite is free to use – just enter a domain and ask a question.

Appendix

Result table

LLM Benchmark Results

| Model | Reasoning | Score | Avg latency | Total cost (100 queries) |
| --- | --- | --- | --- | --- |
| Gemini 3.1 Pro | low | 0.93 | 17s | $4.07 |
| Claude Sonnet 4.6 | low | 0.92 | 19s | $4.44 |
| Gemma 4 26B (self-hosted) | on | 0.90 | 250s | self-hosted |
| GPT-5 | low | 0.89 | 38s | $2.59 |
| Gemma 4 26B (Vertex AI) | on | 0.89 | 48s | $0.39 |
| Gemini 3 Flash | low | 0.88 | 7s | $0.76 |
| GPT-5 | off | 0.87 | 18s | $1.70 |
| GPT-5 mini | low | 0.87 | 29s | $0.46 |
| Claude Haiku 4.5 | on | 0.86 | 21s | $2.20 |
| Gemma 4 26B (Vertex AI) | off | 0.86 | 13s | $0.17 |
| Gemini 3.1 Flash Lite | low | 0.85 | 7s | $0.48 |
| Gemma 4 26B (self-hosted) | off | 0.85 | 31s | self-hosted |
| Qwen3.5 122B | on | 0.85 | 186s | self-hosted |
| GPT-5 mini | off | 0.84 | 24s | $0.37 |
| Claude Haiku 4.5 | off | 0.83 | 12s | $1.63 |
| Qwen3.5 122B | off | 0.83 | 45s | self-hosted |
| Qwen3.6 35B | off | 0.82 | 36s | self-hosted |
| GPT-5 nano | low | 0.72 | 17s | $0.56 |
| GPT-5 nano | off | 0.58 | 8s | $0.24 |

Phong works on projects spanning NLP, anomaly detection, active learning and visualisation. He is best known for his work on Red Sift Radar.